You now know what collections and sequences are. The real skill is knowing which one to reach for in a given situation. Using a sequence where a collection would be faster — or a collection where a sequence would save memory — both lead to worse code. This guide gives you a clear, practical decision framework backed by real Android scenarios.

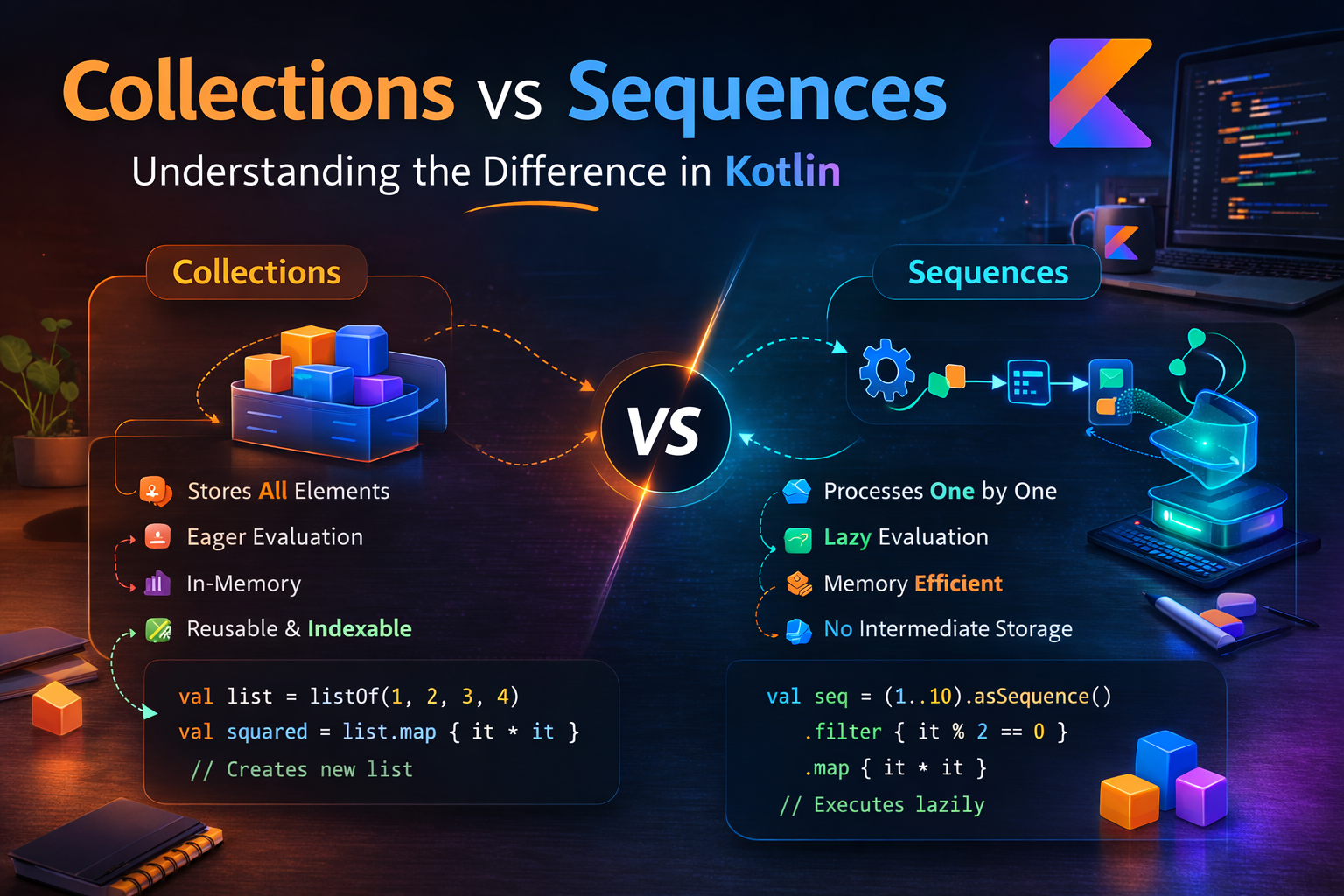

The Core Difference in One Sentence

Collections are eager — every operation processes all elements immediately and returns a new collection.

Sequences are lazy — operations build a pipeline that only runs when a terminal operation requests results.

val numbers = listOf(1, 2, 3, 4, 5, 6, 7, 8, 9, 10)

// Collection — two full passes, two intermediate lists created

val collectionResult = numbers

.map { it * 2 } // pass 1: [2,4,6,8,10,12,14,16,18,20] — all 10

.filter { it > 10 } // pass 2: [12,14,16,18,20] — all 10 again

// Result: [12, 14, 16, 18, 20]

// Sequence — one pass, no intermediate lists, stops early if possible

val sequenceResult = numbers.asSequence()

.map { it * 2 } // no work yet

.filter { it > 10 } // no work yet

.toList() // now processes — one element at a time

// Result: [12, 14, 16, 18, 20]When to Use Collections

Small to medium datasets

Sequences have overhead — lambda dispatch, iterator wrappers, pipeline setup. For small lists this overhead costs more than the savings:

// ✅ Collection — fast for small lists, no unnecessary overhead

val topCategories = articles

.groupBy { it.category }

.mapValues { (_, list) -> list.size }

.entries

.sortedByDescending { it.value }

.take(5)

.map { it.key }

// ❌ Sequence here — adds overhead, no benefit for ~50 articles

val topCategories = articles.asSequence()

.groupBy { it.category } // groupBy is a terminal op on sequences anyway

...When you need the result multiple times

// ✅ Collection — compute once, use many times

val publishedArticles = articles.filter { it.isPublished }

val count = publishedArticles.size // use 1

val titles = publishedArticles.map { it.title } // use 2

val latest = publishedArticles.maxByOrNull { it.publishedAt } // use 3

// ❌ Sequence — re-runs the pipeline each time a terminal op is called

val publishedSeq = articles.asSequence().filter { it.isPublished }

val count = publishedSeq.count() // runs pipeline once

val titles = publishedSeq.map { it.title }.toList() // runs pipeline again

val latest = publishedSeq.maxByOrNull { it.publishedAt } // runs pipeline againWhen you need groupBy, associate, or partition

These operations return a Map or Pair — they're terminal by nature and consume the entire collection anyway. The lazy benefit of sequences is lost:

// ✅ Collection — groupBy consumes everything regardless, no sequence benefit

val byCategory = articles.groupBy { it.category }

val (published, drafts) = articles.partition { it.isPublished }

val articleMap = articles.associateBy { it.id }

// ❌ Sequence adds overhead here with no benefit

val byCategory = articles.asSequence().groupBy { it.category } // still processes allWhen order of intermediate results matters for debugging

// ✅ Collection — each step produces a concrete inspectable list

val step1 = articles.filter { it.isPublished }

println("After filter: ${step1.size}") // easy to inspect intermediate state

val step2 = step1.map { it.title }

println("After map: $step2")

// Sequences don't produce intermediate results — harder to debug step by stepWhen to Use Sequences

Large collections with early termination

The biggest win for sequences — when you only need a few results from a large dataset:

// ✅ Sequence — 100,000 articles, only need first 10 matches

fun getTopUnreadArticles(articles: List<Article>, readIds: Set<String>): List<Article> {

return articles.asSequence()

.filter { it.id !in readIds }

.filter { it.isPublished }

.sortedByDescending { it.viewCount }

.take(10) // stops after 10 found — never processes the rest

.toList()

}

// ❌ Collection — creates two intermediate lists of 100,000 each

fun getTopUnreadArticles(articles: List<Article>, readIds: Set<String>): List<Article> {

return articles

.filter { it.id !in readIds } // 100,000 → maybe 80,000

.filter { it.isPublished } // 80,000 → maybe 70,000

.sortedByDescending { it.viewCount } // sorts all 70,000

.take(10) // finally takes 10

}Many chained operations on large data

Each additional operation on a collection creates another full intermediate list. Sequences create none:

// ✅ Sequence — 5 operations, 0 intermediate lists

val result = allArticles.asSequence()

.filter { it.isPublished }

.filter { !it.isDeleted }

.filter { it.category in selectedCategories }

.map { it.toDisplayModel() }

.sortedByDescending { it.date }

.toList()

// ❌ Collection — 5 operations, 4 intermediate lists in memory

val result = allArticles

.filter { it.isPublished } // intermediate list 1

.filter { !it.isDeleted } // intermediate list 2

.filter { it.category in selectedCategories } // intermediate list 3

.map { it.toDisplayModel() } // intermediate list 4

.sortedByDescending { it.date } // final listInfinite or very large streams

// ✅ Sequence — infinite stream, only compute what's needed

val firstPrimesOver100 = generateSequence(2) { it + 1 }

.filter { n -> (2 until n).none { n % it == 0 } }

.filter { it > 100 }

.take(5)

.toList()

// [101, 103, 107, 109, 113]

// Cannot do this with a collection — you can't create an "infinite list"File or stream processing

// ✅ Sequence — process 1GB log file without loading it into memory

fun countCriticalErrors(logFile: File): Int {

return logFile.bufferedReader()

.lineSequence()

.filter { "CRITICAL" in it }

.count()

}Direct Comparison — Same Task, Both Approaches

Scenario 1: Get first 5 published articles from large list

val articles = List(50_000) { Article(id = "$it", isPublished = it % 3 == 0) }

// Collection — processes all 50,000 before taking 5

val result1 = articles

.filter { it.isPublished } // ~16,666 items processed, intermediate list created

.take(5) // takes from the 16,666

// Sequence — stops after finding 5 matches (~15 items processed)

val result2 = articles.asSequence()

.filter { it.isPublished }

.take(5)

.toList()

// Winner: Sequence — drastically fewer operationsScenario 2: Group 50 articles by category

val articles = listOf(/* 50 articles */)

// Collection — direct, no overhead, result cached

val byCategory = articles.groupBy { it.category }

// Sequence — adds overhead, groupBy still processes everything

val byCategory = articles.asSequence().groupBy { it.category }

// Winner: Collection — groupBy processes all anyway, sequence overhead is wastedScenario 3: Transform all 1,000 articles to display models

val articles = listOf(/* 1,000 articles */)

// Collection — single map operation, simple and fast

val displayModels = articles.map { it.toDisplayModel() }

// Sequence — adds overhead, no early termination possible here

val displayModels = articles.asSequence().map { it.toDisplayModel() }.toList()

// Winner: Collection — when you need ALL results, sequence overhead isn't worth itScenario 4: Filter + map + take on 10,000 items

val articles = List(10_000) { Article(id = "$it") }

// Collection — two full passes, two intermediate lists

val result = articles

.filter { it.id.toInt() % 7 == 0 } // ~1,428 items

.map { it.title.uppercase() } // ~1,428 items

.take(20)

// Sequence — one pass, stops after 20 matches (~140 items processed)

val result = articles.asSequence()

.filter { it.id.toInt() % 7 == 0 }

.map { it.title.uppercase() }

.take(20)

.toList()

// Winner: Sequence — clear performance and memory advantageDecision Framework

| Situation | Use | Reason |

|---|---|---|

| Less than ~1,000 elements | Collection | Sequence overhead not worth it |

| Need result multiple times | Collection | Sequence re-runs pipeline each time |

Using groupBy, partition, associate |

Collection | These process everything anyway |

| Debugging intermediate steps | Collection | Each step produces inspectable result |

| Need all results from all elements | Collection | No early termination benefit |

Large list + early termination (first, take) |

Sequence | Stops as soon as result is found |

| Many chained ops on large data | Sequence | Zero intermediate lists |

| Infinite or unbounded stream | Sequence | Collections can't be infinite |

| File / stream processing | Sequence | Never loads full content into memory |

Single map or filter only |

Collection | No multi-step benefit for sequences |

Real Android ViewModel Example

class ArticleViewModel(private val repository: ArticleRepository) : ViewModel() {

private val allArticles = MutableStateFlow<List<Article>>(emptyList())

// ✅ Collection — small result set, used multiple times in UI

val categories: StateFlow<List<String>> = allArticles.map { articles ->

articles

.map { it.category }

.distinct()

.sorted() // small list, collection is fine

}.stateIn(viewModelScope, SharingStarted.Lazily, emptyList())

// ✅ Sequence — large list, multiple filters, early take

fun getFilteredArticles(

category: String,

query: String,

limit: Int = 50

): List<Article> {

return allArticles.value.asSequence()

.filter { category == "All" || it.category == category }

.filter { query.isEmpty() || it.title.contains(query, ignoreCase = true) }

.filter { it.isPublished && !it.isDeleted }

.sortedByDescending { it.publishedAt }

.take(limit)

.toList()

}

// ✅ Collection — need full grouped result for section headers

fun getArticlesByCategory(): Map<String, List<Article>> {

return allArticles.value

.filter { it.isPublished }

.groupBy { it.category } // groupBy processes all — no sequence benefit

}

// ✅ Sequence — find first unread article quickly

fun getNextUnreadArticle(readIds: Set<String>): Article? {

return allArticles.value.asSequence()

.filter { it.id !in readIds }

.filter { it.isPublished }

.firstOrNull() // stops at first match

}

}Common Mistakes to Avoid

Mistake 1: Using sequences for everything

// ❌ Over-engineering — sequence adds overhead for 10 items

val result = listOf("a", "b", "c", "d", "e")

.asSequence()

.map { it.uppercase() }

.filter { it != "C" }

.toList()

// ✅ Plain collection is cleaner and faster here

val result = listOf("a", "b", "c", "d", "e")

.map { it.uppercase() }

.filter { it != "C" }Mistake 2: Not collecting a sequence when reuse is needed

// ❌ Pipeline runs 3 times — wasteful

val seq = articles.asSequence().filter { it.isPublished }

val count = seq.count()

val list = seq.toList()

val first = seq.firstOrNull()

// ✅ Collect once, reuse the list

val published = articles.filter { it.isPublished }

val count = published.size

val first = published.firstOrNull()Mistake 3: Forgetting sequences don't cache — calling toList() to cache

// ✅ If you need to reuse sequence results, convert to list explicitly

val expensiveResults = articles.asSequence()

.filter { expensiveCheck(it) } // expensive per-element work

.toList() // run once, cache as List

// Now use expensiveResults multiple times without re-running the pipeline

val count = expensiveResults.size

val titles = expensiveResults.map { it.title }Mistake 4: Using sequence with a single operation

// ❌ One operation — sequence overhead with zero benefit

val titles = articles.asSequence().map { it.title }.toList()

// ✅ Just use collection directly

val titles = articles.map { it.title }Summary

- Collections are eager — each operation runs immediately on all elements, creates intermediate lists

- Sequences are lazy — operations define a pipeline that runs only when a terminal operation is called

- Use collections for small datasets, when results are reused, for

groupBy/partition, and when a single operation suffices - Use sequences for large datasets with early termination, many chained operations, infinite streams, and file processing

- The crossover point is roughly 1,000 elements — below that, collections are faster

- Sequences shine most with

first(),take(n),find()— operations that stop early - If you need to reuse sequence results, call

toList()to cache them as a collection - A single

maporfilteron any size list doesn't benefit from sequences — use collections - The real win is multiple chained operations + early termination on large data — that's when sequences truly pay off

The rule of thumb: start with collections — they're simpler, more debuggable, and fast enough for most cases. Reach for sequences when you're dealing with large data, many operations, or infinite streams. Profile before optimizing — the right choice depends on your actual data size and usage pattern.

Happy coding!

Comments (0)